System Initiative is a deployment/orchestration tool similar to Ansible, Terraform, etc. The following podcast gives a good overview:

They recently decided to shut down their cloud service. The following video gives some insight into why:

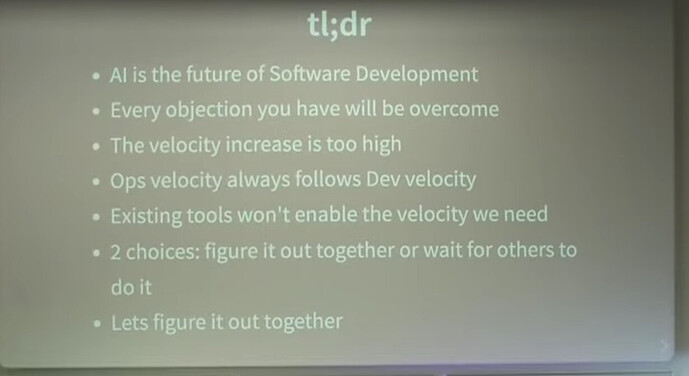

There are a few notable points:

- Something changed in the last six months: AI coding got good. System Initiative is an example of how AI can completely upend a company’s plans.

- Development velocity has increased tremendously, and ops will have to follow.

- System Initiative was built around a GUI and a new way to version things. Adam commented that users don’t want to give up Git and version control, and they don’t want GUIs. (It appears the command line, text formats, and Git are still the future.)

Perplexity’s summary:

Adam’s talk is about why AI‑native automation is inevitable for software development and operations, how it completely changes velocity, and what ops/infra people need to do to adapt.

Core thesis

- AI is now the future of software development, not hype; its utility and velocity gains are too high for it not to be adopted everywhere.

- When dev velocity jumps, operational velocity must follow, and current tools (infra‑as‑code, Terraform, etc.) already struggle; ops is now the bottleneck.

- The room (infra/ops crowd) must “flip” from skepticism to “this is happening” and figure out the new way together, or someone else will do it for them.

System Initiative story and failure

- He re‑introduces himself (Chef founder, long‑time systems admin, open‑source true believer) and explains that System Initiative spent six years trying multiple radically different infra‑automation product ideas.

- Despite ambitious work, the product never found fit; almost nobody in the audience used or loved it. He recently had to lay off most of the team and considers that a failure, both toward them and toward the community he wanted to serve.

- A small team decided to “try again” because they still love this problem space and because recent AI progress opened a fundamentally different path.

What changed in AI and dev

- In the last ~6 months, many teams quietly figured out how to use LLMs as real technology (like Postgres or Bash) for serious software work, not just as a gimmick.

- With the right prompting and workflows, multiple frontier models now produce consistently high‑quality, working code. Tools like Claude Code matter because they are agents running in the terminal, not just editors, so you quickly stop reading every line and “let it rip.”

- He gives internal examples: an agent using System Initiative to discover ~2,000 AWS resources, diagnose a production bug, or translate an app from AWS to Azure in minutes. After seeing this, he never wants to build infra any other way.

Velocity and the new dev role

- AI has pushed code output to absurd levels (he cites real people generating ~50k lines/day), which means almost any system you can describe can be rebuilt if you can afford tokens.

- Compared to the DevOps/cloud revolution, this velocity jump is vastly larger: what cloud did for ops, AI does for dev, squared. Ops must adapt because all that code still needs to run on real infrastructure.

- The human role collapses to design and planning: you define architecture, standards, and constraints; the agent implements, tests, and even reviews according to that architecture, which you then spot‑check at a structural level rather than reading every diff.

Architecture, quality, and “taste”

- To make AI‑built systems stable, you need explicit software architecture and vocabulary (e.g., domain‑driven design) so agents can follow consistent patterns; agents are great at the tedious, clean design work that humans usually avoid.

- He distinguishes external quality (does it work, is it secure, do users like it?) from internal quality (architecture, code cleanliness), arguing that external quality remains the only real measure, and that is where System Initiative failed.

- Many beloved engineering principles (DRY, naming/style, lots of taste debates) matter much less when you no longer read most code; what still matters is architecture and constraints that keep large AI‑generated codebases coherent.

Consequences for ops and automation

- The AI wave will not “pop and vanish” because the productivity gain is already realized; if a downturn comes, it is more likely that people who failed to adapt (including in ops) are the ones who lose out.

- Automation systems must become AI‑native, designed for agents as primary users: transparent (every step introspectable), easily extensible in‑line, one‑to‑one with real domains (no human‑centric abstractions that confuse models), richly validated at each step, and spanning the full lifecycle (deploy, debug, observe, remediate).

- Traditional infrastructure‑as‑code is in trouble because it is slow, opaque, too abstract, fragmented across CI/CD and tooling, and encourages arguing about modules and style instead of delivering fast, validated change that agents can drive.

“Swamp” demo direction

- In response, they started a new project (codename “Swamp”) only a few days before the talk, reusing lessons from System Initiative but built under AI‑native assumptions.

- His colleague Keef demonstrates how an AI‑driven workflow extended Swamp to support Proxmox in roughly 10 minutes, then discovered and reasoned about his home‑lab VMs and, with a bit more work, generated full CRUD automation for them. This illustrates the kind of agent‑first infra tooling Adam is arguing for.